Winner of the Spring 2018 StMU History Media Award for Best Article in the Category of “Cultural History”

With the release of Windows 10 in 2015, Microsoft had been working on the Windows operating system for over thirty years. We know Windows today as one of the most powerful and ubiquitous operating systems in the computer world. It has well-known features like Cortana and the File Explorer. Windows 10 also offers conveniences such as more tiles in the Start menu and closer integration with cloud applications like OneDrive and Dropbox. Windows’ powerful and easy-to-use style has made it the most used desktop operating system in the world, with over one billion users daily. But that was not always the case, and as we will see, the Windows operating system had very humble beginnings. Let’s go back to a time when microcomputers were just beginning and had limited functionality.

In the early 1980s, there was DOS, the Disk Operating System. The early microcomputers of that decade contained many pieces of hardware that allowed the computer to turn on and process data, but it was the operating system that allowed the user to communicate with the computer and use its hardware to do things like run a program, open and save files on a disk drive, or send data to a connected printer.1 DOS was very important in the development of computer technology. The Microsoft Disk Operating System (MS-DOS) was a widely used operating system that was initially sold with International Business Machines (IBM) microcomputers, starting with the flagship IBM PC, released in August 1981. The difference between microcomputers and the technology that came before them is the microprocessor. These microcomputers are the same kind of machine as today’s personal computers and laptops; we just call them “computers” today because it is shorter. MS-DOS sold so well because it was licensed to IBM, which used it in all of its microcomputers. Because of this, Microsoft received a commission on each microcomputer sold. While PC DOS (MS-DOS running on an IBM PC) was compatible with microcomputers other than IBM’s, many customizations had to be made in order to run it on other machines. But thanks to IBM’s established name and its marketing strategy, IBM PC sales were monumental. This rapid growth was a boon for both IBM and Microsoft. Compared to the operating systems we use today, DOS seems very crude. It was complicated to use, and learning the specific commands and keywords needed to do anything took considerable effort. However, it was a necessary first stage in the further development of operating systems like Windows.

The microprocessor was a key component in the development of microcomputers. It made it possible to have high processing power in very little space. It is common knowledge that computers use electricity, but the hardware in a computer uses that electricity in different ways to produce a response. Computer hardware is necessary for the computer to run, and before the microprocessor came to be, that hardware was not very space efficient. Space was a problem for people using computers at home and at work because the earlier technology was so much bigger. Intel, a large manufacturer of computer hardware, saw no need for a microprocessor until it was approached by a Japanese calculator company known as Busicom. Busicom wanted to open a new line of calculators that were fast and spread their circuits across several different chips. Intel quickly realized that the job was too big for a company of its size at the time, but someone on its engineering staff imagined a single, general-purpose central chip that could be programmed to do the work of many specialized ones: the microprocessor. Calculators are meant to be compact, so naturally a calculator would need hardware that could run it while staying small. With that in mind, Intel created the 4004 microprocessor chip.2 The 4004 was a 4-bit chip and was not very successful, but its descendant, the 8088, was very successful: it was a 16-bit processor with an 8-bit external data bus, and far more powerful. The 8088 was the microprocessor that IBM used in its first line of microcomputers, which brought these two companies together for the first time. This powerful new technology, however, benefited not only IBM. It was also exactly what Xerox, a producer of document technology, needed in order to change the world of computers forever.

Disk operating systems like Microsoft’s MS-DOS were only a stepping stone on the path to operating systems like Windows 10. Over at Xerox’s Palo Alto Research Center, something huge was in development, something so revolutionary in the world of computer science that we still use its technology today. Xerox was working hard on the idea of interactivity, a concept that the computer scientist J.C.R. Licklider had researched and written about. Interactivity allowed computers to operate in “real time.” Many of the things we use in our lives operate in real time, like cars, telephones, and lawnmowers. These things operate in real time because they respond immediately to people, something most computers had not done before the microcomputer age. Computers had operated in a mode called batch processing, meaning that programmers wrote their programs entirely away from the computer. Before DOS, coders had to punch code into special punch cards by the hundreds, one card for each line of code. Then those hundreds of cards were fed into a card reader connected to a mainframe computer, which executed the commands on each card. If the program required data to manipulate, that data had to be punched into cards as well, and then read by the card reader. The card reader would read the pattern of holes in each card and pass its contents along to the computer. This type of input left the computer and the user completely disconnected. The user did not interact with the computer; he or she interacted with the punch cards and the input machines instead. For a computer to run in “real time,” it would need input devices that gave the user immediate access to command execution. With MS-DOS, one could access files and programs on a disk or tape in real time using the input device that transformed microcomputing: the keyboard.
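The contrast between batch processing and interactivity can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the tiny SET/ADD/PRINT instruction set and the names `execute`, `run_batch`, and `InteractiveSession` are invented for this example and do not correspond to any real mainframe or DOS software.

```python
def execute(instruction, variables):
    """Run one made-up instruction; return a line of output, or None."""
    op, name, *rest = instruction.split()
    if op == "SET":
        variables[name] = int(rest[0])
    elif op == "ADD":
        variables[name] += int(rest[0])
    elif op == "PRINT":
        return f"{name} = {variables[name]}"
    else:
        raise ValueError(f"unknown instruction: {instruction!r}")
    return None


def run_batch(deck):
    """Batch style: the whole deck of 'cards' is read in, and the
    programmer sees nothing until the complete printout comes back."""
    variables, printout = {}, []
    for card in deck:
        line = execute(card, variables)
        if line is not None:
            printout.append(line)
    return printout


class InteractiveSession:
    """Interactive style: each typed command is answered right away."""

    def __init__(self):
        self.variables = {}

    def enter(self, command):
        return execute(command, self.variables)
```

In the batch version a mistake on any card is only discovered after the whole deck has been submitted, while the interactive session answers each command as soon as it is entered, which is the "real time" quality the paragraph above describes.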

The keyboard allowed for direct input into the microcomputer. When using DOS, words or phrases had to be typed in to make anything happen, which is why the keyboard was so important. When a key was pressed on the keyboard, a string of binary digits was sent to the computer. Each letter or character had its own 8-bit string, so the computer was programmed to read each individual string and display the corresponding character on the screen. This was much closer to interactivity than earlier input devices, but it was still not at the level it could be. So, with the idea of interactivity in mind, Xerox created the Alto, a personal computer that was the first computer to use a graphical user interface and a mouse.3 The mouse allowed the user to move a cursor on the screen in order to move items around and select whatever files or icons the user wanted. The mouse operated completely in real time; when it was moved, the cursor would move, and when the button was clicked, something was selected. Unfortunately, the men upstairs at Xerox did not continue to develop the Alto; instead they shared its technology with their competitor, Apple Computer. Soon the Alto faded away, and Xerox gave away its shot at being one of the major players of the microcomputer age. If Xerox had continued to develop its technology, it might have become the most successful company in the field. But after Xerox gave the golden egg to Apple, the graphical user interface became the basis for a completely new type of operating system.
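The idea that each typed character has its own 8-bit string can be illustrated with a short Python sketch. It is a simplification: it assumes plain ASCII character codes stored in one byte each, whereas real PC keyboards sent key codes that the computer then translated into characters. The function names are made up for this example.

```python
def char_to_bits(ch):
    """Return the 8-bit binary string for a single character."""
    code = ord(ch)
    if code > 0xFF:
        raise ValueError("character does not fit in 8 bits")
    return format(code, "08b")


def bits_to_char(bits):
    """Turn an 8-bit binary string back into its character."""
    return chr(int(bits, 2))


def type_keys(text):
    """Simulate typing: one 8-bit string per character."""
    return [char_to_bits(ch) for ch in text]


def echo(bit_strings):
    """Simulate the computer reading each string and showing the text."""
    return "".join(bits_to_char(bits) for bits in bit_strings)
```

For instance, `char_to_bits("A")` gives `"01000001"`, and `echo(type_keys("DIR"))` returns the original text `"DIR"`.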

The graphical user interface (GUI) is used on nearly every computer today. Instead of typing many lines of command keywords and phrases, as someone using DOS would have to do, the user simply selects an icon displayed on the screen to open an application like Microsoft Word or play a game like Fortnite. Icons had been in use long before the introduction of the GUI on computers. Mapmakers, for example, would use an icon of a plane to mark an airport at that location.4 With GUIs, icons found a new use; a smartphone user, on an iPhone for example, sees a screen filled with different icons. Since the touchscreen is an advanced form of GUI, the iPhone’s screen is displayed graphically so that the user can select an application in real time and see the screen change. The emphasis on real time is important because the idea of interactivity relies on it. Looking at a screenshot of DOS and its functionality, it is easy to see why the GUI is much more efficient and pleasing to the user. Graphical user interfaces not only display real-time action on a screen; they also allow users to organize their computers so that files and objects are displayed in a way that is pleasing to them. Microsoft, Apple, and many other companies knew this and used it in the development of their next products.

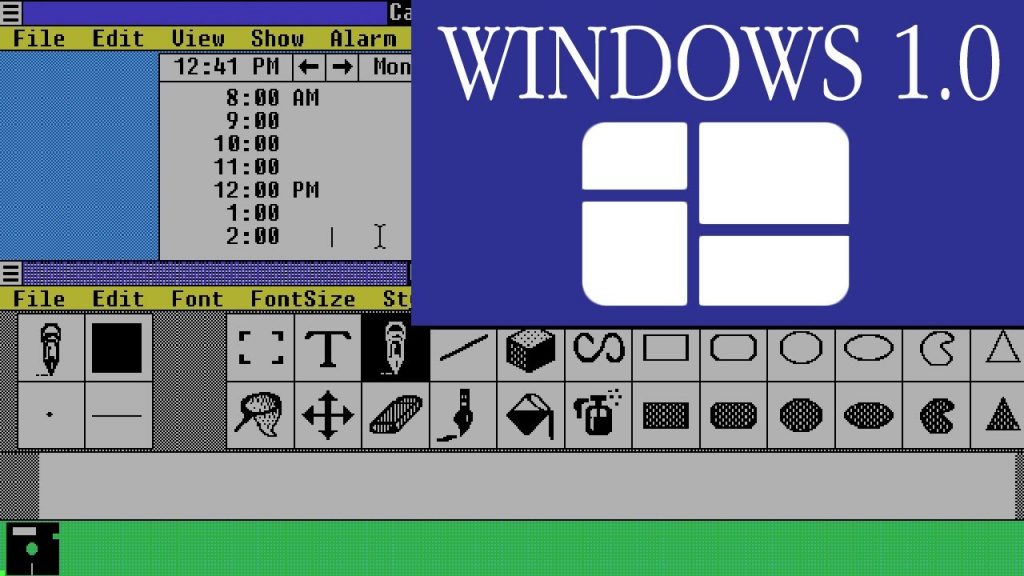

With the information they received from Xerox, Apple Computer integrated the GUI into its software with the help of some former Xerox PARC employees. The news of Apple computers having pictures and a mouse spread quickly, and Microsoft’s text-based PC DOS could not compete. Bill Gates was devoted to making computers “user-friendly.” He wanted computers to be accessible to the everyday user and not just to computer experts or businessmen. His drive for a better computer led him to envision the “interface manager,” a new product that would keep the same PC DOS core operating system but cover it with a graphical user interface shell, making the software easier to use and more efficient. This project was named Windows, and work on version 1.0 started in 1981, with applications organized along the bottom of the screen. In 1982, this was changed to pull-down menus and dialog boxes, and it featured the ability to display more than one document on the screen at once in “windows,” which is how it received its name.5 Windows was still in its development phase when the Apple Lisa was released in 1983. The Lisa featured a high-resolution graphical interface sitting on top of the Apple disk operating system. Microsoft had no competing product. Fortunately for Microsoft, the Lisa was not successful, but a year later, in 1984, the Apple Macintosh was released, which was designed to be lower in cost. It was the first commercially successful product to feature a multi-window graphical user interface. It used icons that looked like sheets of paper for files and icons of folders for the file directory. It also provided many tools that worked well with the graphical user interface. It showcased a “desktop,” a virtual area where programs and files could be stored so that the user could easily see them.6 When the mouse was connected, the cursor could be moved to manipulate objects and files by selecting them and moving them or opening them, all in real time!7 In the same year, Bill Gates and the Windows team added the mouse to Windows to increase its capability, but their product was growing more complex as time went on. They needed to get their product ready to launch, and soon.

A major reason the graphical user interface was so successful was the ease of access it gave to files in the directory. When using the computer, we download, save, delete, and edit files on a regular basis. If it were difficult to find the files we need, would we use the computer as much as we do? The GUI provides a graphical representation of the hierarchical file directory and of many other programs on the computer. In DOS, the user can list the contents of a directory using the DOS command dir, and the directory is shown as a plain text list of files and folders with details such as their sizes and dates. DOS was very basic, and the user needed a good deal of experience in order to use the computer properly. The GUI, on the other hand, was intuitive and easy to learn. It only made sense that a visual representation of something you must use is much more effective than words and phrases on a screen.
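To make the difference concrete, here is a small Python sketch that shows the same made-up directory two ways: as a flat text listing of one folder, loosely in the spirit of `dir`, and as an indented view of the whole hierarchy, a text stand-in for the nested folder icons a GUI would draw. The directory contents, the output layout, and the function names are all assumptions for illustration; this does not reproduce the real DOS output format.

```python
# Hypothetical disk contents: folders are dicts, files map to a size in bytes.
DISK = {
    "LETTERS": {"MOM.TXT": 1200, "BOSS.TXT": 860},
    "GAMES": {"CHESS.EXE": 40960},
    "README.TXT": 512,
}


def dir_listing(folder):
    """Flat, text-only listing of a single directory."""
    lines = []
    for name, entry in sorted(folder.items()):
        if isinstance(entry, dict):
            lines.append(f"{name:<12} <DIR>")
        else:
            lines.append(f"{name:<12} {entry:>8}")
    return lines


def tree_view(folder, depth=0):
    """Indented view of the whole hierarchy, folder by folder."""
    lines = []
    for name, entry in sorted(folder.items()):
        lines.append("  " * depth + name)
        if isinstance(entry, dict):
            lines.extend(tree_view(entry, depth + 1))
    return lines
```

With the flat listing, seeing what is inside `LETTERS` means asking again for that folder by name; the hierarchical view shows the nesting at a glance, which is roughly what folder icons gave users without any typing.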

1984 was the year Bill Gates announced the release date of Windows to the press; he set the launch date for the end of 1984, but Windows was not completed until June of 1985.8 Windows 1.0 could not compete with the Mac’s operating system, but it was one step closer to what Windows would become. Windows 2.0 was more competitive, but Apple filed a lawsuit for copyright infringement, since Microsoft now used the same idea of overlapping windows instead of tiles. Despite setbacks, Windows was starting to become a commonly used product because of its compatibility. Windows could run on many different types of machines from many different manufacturers, and many companies wanted their computers to use Windows. Microsoft grew and profited so much that it started to dominate the market. In 1990 Windows 3.0 was released; it was far more advanced than the Macintosh in functionality and went on to sell close to ten million copies in two years.9 Windows could be marketed and sold to many different PC manufacturers, and Apple, which did not license its OS, took a huge blow. With its product being used by the majority of manufacturers, Microsoft helped drive the cost of microcomputers down dramatically, almost putting Apple Computer out of business. If it weren’t for the Macintosh’s effectiveness for graphic design and its use in schools, Apple could have gone bankrupt. Before it became a business giant in consumer electronics, Microsoft dealt with the challenge of developing Windows, which spurred innovation and economic growth for the company.10 Since Windows 3.0, Windows has continued to develop and is still a widely used product around the globe.

1. Salem Press Encyclopedia, 2013, s.v. “Computer operating systems,” by John Panos Najarian.

2. Gordon E. Moore, “Intel-memories and the microprocessor,” Daedalus 125 (1996): 55.

3. Douglas K. Smith and Robert C. Alexander, Fumbling the Future (New York: William Morrow and Company, Inc., 1988), 57-60.

4. Blake Ives, “Graphical User Interfaces for Business Information Systems,” vol. 6 (1982): 36.

5. Salem Press Encyclopedia, 2013, s.v. “Microsoft Releases the Windows Operating System,” by Marcia B. Dinneen and Jonathan E. Dinneen.

6. Salem Press Encyclopedia, 2013, s.v. “Introduction of the Apple Macintosh,” by R. Craig Philips.

7. Philip G. Stein, James R. Matey and Karen Pitts, “A Review of Statistical Software for the Apple Macintosh,” The American Statistician 51 (1997): 69.

8. Salem Press Encyclopedia, 2013, s.v. “Microsoft Releases the Windows Operating System,” by Marcia B. Dinneen and Jonathan E. Dinneen.

9. Salem Press Encyclopedia, 2013, s.v. “Microsoft Releases the Windows Operating System,” by Marcia B. Dinneen and Jonathan E. Dinneen.

10. Marshall Phelps and David Kline, Burning the Ships (New Jersey: John Wiley & Sons, 2009), 166.
