The year was 1959, and Robert Noyce had achieved what many had been dreaming of: an invention that would revolutionize technology. This invention, the silicon-based integrated circuit, or microchip, would allow humans to control electricity more precisely and would become the foundation of most electronic devices we know today. But the path to using silicon to create these small devices was not as straightforward as it might seem.

Silicon, with the chemical symbol Si and the atomic number 14, is an important component of many minerals. It is the second most abundant element in the Earth’s crust (after oxygen), making up 27.7 percent of it, and the seventh most abundant element in the universe. Silicon is also considered one of the elements necessary for life as we know it.1 Although silicon is not found in its free state in nature, it occurs in many combined forms, including sand, quartz, and rock crystal. It is also found beyond Earth, in the Sun and other stars.2

Although the glassmaking process continued to be refined, humans did not use silicon as a pure substance for thousands of years. It wasn’t until 1787 that the French chemist Antoine Lavoisier (1743-1794) first identified silicon as an element present in rocks. Despite silicon’s abundance, chemists after Lavoisier took a long time to study it and learn how to use it. In 1823, some 36 years after its initial identification, the Swedish chemist Jöns Jacob Berzelius (1779-1848) finally isolated silicon as a metalloid element. This was not only a major achievement in chemistry; it also opened the way for further research into silicon’s properties and potential applications.6

Transistors rose to prominence in the 1950s, driven in large part by the international confrontations of the mid-20th century, including World War II, the Korean War, and the Cold War that followed.10 These events, although terrible in many ways, mobilized some of America’s greatest scientific minds. Electronics and their applications were placed at the front line of the war effort to defend the nation’s values. Innovation rose from urgency, giving scientists and engineers the opportunity to explore new materials, including silicon. One of the major outcomes of this research was the early digital computer, an invention that began as part of the war effort but was later marketed as a commercial product. As scientists developed the basic idea of a computer, they quickly identified the need for a better way to control electricity, and small, energy-efficient transistors, which could replace bulky vacuum tubes, were an obvious choice.

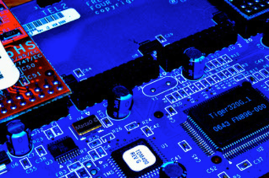

As transistors were created and the need to commercialize them grew, researchers began to focus on building devices that were more compact yet more powerful. This “shrinking” of the transistor meant finding ways to fit more transistors into an electronic product to increase its capabilities.12 Technology companies needed these devices to work better and faster, and to perform tasks far more complex than simply switching current on and off like a light switch. It was not until 1959 that Robert Noyce (1927-1990), an American physicist, used silicon to create the first monolithic integrated circuit, or microchip. This device consisted of a set of fast, small, and inexpensive electronic circuits that allowed finer control of electricity.13

In reality, Robert Noyce was not the only inventor of the microchip. Another engineer, Jack Kilby (1923-2005) at Texas Instruments, independently invented a very similar integrated circuit at nearly the same time. The work of these two inventors gave rise to the “Monolithic Idea”, which integrates all the parts of an electronic circuit into a single (“monolithic”) block of semiconductor material, in this case silicon. The availability of this resource allowed them to create what would become the building block of digital computer technology and would enable generations of innovation.14

One example of the progress that followed this invention is Moore’s Law, named after Gordon E. Moore, co-founder of Intel, who predicted that the number of transistors that can be packed into a given area would double about every two years, increasing their speed and capability while the cost of computing is roughly halved.15 Today, more than fifty years later, Moore’s Law continues to serve as a guide for technologists around the world, and its pace has so far defied predictions of its end. This prediction helped transform computing from a rare and expensive experiment into an affordable necessity. Everyday technologies such as the internet, social media, and data analytics all grew out of the semiconductor industry and the progress described by Moore’s Law.16

Courtesy of Wallpaperflare.com.17

Silicon, an abundant element and a fundamental component of many naturally occurring minerals, has become a key player in society as we know it today. The history of early civilizations shows silicon, in the form of quartz, being used to make glass and other building materials that are still in use today. More recent developments have used the physical and electrical properties of silicon to establish it as central to the advancement of computer technology. These advances, including the creation of the transistor, made possible better-performing technology and new electronic applications. Silicon’s influence is far-reaching, not just because of its natural abundance but because it has become a driving force of the economy. So vital is this element that a whole region, Silicon Valley, has been named after it, and it is home to some of the best-known companies today. Although silicon may not be a household name, it can rightly be recognized as the building block of modern technology.

- Pappas S. Facts About Silicon. Live Science. 2018 Apr 27 (accessed 2020 Sep 2). https://www.livescience.com/28893-silicon.html

- Hobart D, Smith J. Periodic Table of Elements: Silicon. Los Alamos National Laboratory. 2011 (accessed 2020 Oct 29). https://periodic.lanl.gov/14.shtml

- Needpix.com. Glass Making. (accessed 2020 Oct 9). https://www.needpix.com/photo/765683/glass-glass-artist-glass-blowing-glassblowing-heat-artist-art-glass-object-crafts

- The Development of Glassmaking in the Ancient World. In: Encyclopedia.com. Science and Its Times: Understanding the Social Significance of Scientific Discovery. 2020 (accessed 2020 Oct 29). https://www.encyclopedia.com/science/encyclopedias-almanacs-transcripts-and-maps/development-glassmaking-ancient-world

- Castro J. Silicon or Silicone: What’s the Difference? Live Science. 2013 Jun 20 (accessed 2020 Oct 29). https://www.livescience.com/37598-silicon-or-silicone-chips-implants.html

- SAM Sputter Targets. How was Silicon discovered? | History of Silicon. 2018 (accessed 2020 Oct 29). http://www.sputtering-targets.net/blog/how-was-silicon-discovered-history-of-silicon/

- Wikimedia Commons. Transistors from the 1950s. (accessed 2020 Oct 9). https://upload.wikimedia.org/wikipedia/commons/thumb/5/5e/1950_NPN_General_Electric_Transistors.jpg/220px-1950_NPN_General_Electric_Transistors.jpg

- Ward JA. A Survey of Early Power Transistors. 2007 (accessed 2020 Oct 29). http://www.semiconductormuseum.com/Transistors/LectureHall/JoeKnight/JoeKnight_EarlyPowerTransistorHistory_Transitron_Page3.htm

- Riordan M. The Lost History of the Transistor. IEEE Spectrum. 2004 Apr 30 (accessed 2020 Oct 29). https://spectrum.ieee.org/tech-history/silicon-revolution/the-lost-history-of-the-transistor

- Maliniak L. 1950s: Transistors Fill The Vacuum: The Digital Age Begins. Electronic Design. 2002 Oct 20 (accessed 2020 Oct 29). https://www.electronicdesign.com/markets/defense/article/21772292/1950s-transistors-fill-the-vacuum-the-digital-age-begins

- Intel. Robert Noyce, Intel’s co-founder and the co-inventor of the integrated circuit. (accessed 2020 Oct 9). https://www.intel.com/content/www/us/en/history/museum-robert-noyce.html

- Beiser V. The Ultra-Pure, Super-Secret Sand That Makes Your Phone Possible. Wired. 2018 Aug 7 (accessed 2020 Oct 30). https://www.wired.com/story/book-excerpt-science-of-ultra-pure-silicon/

- Alchin LK. Who Invented the Microchip? Inventions and Inventors. 2017 Jan (accessed 2020 Oct 30). http://www.who-invented-the.technology/microchip.htm

- Alchin LK. Who Invented the Microchip? Inventions and Inventors. 2017 Jan (accessed 2020 Oct 30). http://www.who-invented-the.technology/microchip.htm

- Tardi C. Moore’s Law Explained. Investopedia. 2020 Aug 27 (accessed 2020 Nov 5). https://www.investopedia.com/terms/m/mooreslaw.asp

- Sneed A. Moore’s Law Keeps Going, Defying Expectations. Scientific American. 2015 May 19 (accessed 2020 Nov 8). https://www.scientificamerican.com/article/moore-s-law-keeps-going-defying-expectations/

- Wallpaperflare.com. Silicon Valley. (accessed 2020 Oct 9). wallpaperflare.com/preview/171/420/51/san-francisco-san-francisco-skyline-salesforce-tower-salesforce.jpg

- Alchin LK. Who Invented the Microchip? Inventions and Inventors. 2017 Jan (accessed 2020 Oct 30). http://www.who-invented-the.technology/microchip.htm
