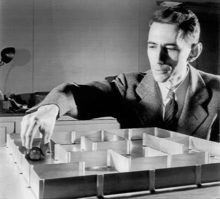

At Bell Laboratories in 1955, John McCarthy helped edit a collection of essays called Automata Studies. Through this work he learned about a potential new field of study, machine learning. Fascinated by mathematics, McCarthy was instantly intrigued. He realized that this was the future of machines, and he began to research it alongside Claude Shannon. They knew that they had to do all they could to create what is now called artificial intelligence and to have it officially recognized by scientists.1

McCarthy was born in Boston in 1927, but his parents lost their house during the Great Depression, which led the family to move to Los Angeles. He showed a bright mind early: at age fifteen he taught himself calculus by reading a textbook from the California Institute of Technology, where he was admitted at age sixteen. Although he left college at first, his time as a draftee in the military made him realize that he needed a change. He went back and finished a Bachelor of Science degree in mathematics, and later found a job at Bell Laboratories. There McCarthy met Claude Shannon, a mathematician best known for founding information theory, who shared his passion for intelligent machines. Together they finished Automata Studies, a collection of essays on intelligent machines. This marked the first step in the creation of AI.2

Arthur Samuel was an inspirational figure to McCarthy as he began his pursuit of intelligent machines. McCarthy described Samuel as “a pioneer of artificial intelligence research.”3 Samuel was a professor who studied machine learning and worked on early AI programs, such as a program that played checkers. Once published, Automata Studies gained the attention of a few scientists who shared this interest, as well as the Rockefeller Foundation, which saw the work as a potential investment. While writing a research proposal, McCarthy coined the term Artificial Intelligence, although he originally wanted to call it Computational Intelligence. The question of naming arose because many people doubted that computers could show intelligence. Proving the existence of computer intelligence to the public became a challenge that McCarthy had to overcome.4

To help research and test AI efficiently, McCarthy created the LISP programming language. It was the first “functional” programming language, built around the mathematical notion of a function: a function takes in arguments and returns a value, and it may be fully recursive, meaning that a procedure can call itself to complete a task. This made data easier to manipulate in code. LISP was for decades a leading language for programming AI and is still used today. McCarthy also wrote a memo proposing modifications to an operating system that led to timesharing. This was important because it made program development easier, which in turn allowed more efficient development of AI.

In 1956, McCarthy and Shannon joined a ten-person research project to study the relationship between language and intelligence. This meeting led to the recognition of AI as its own field of study. With the new field established, the group's next goal was to lay its foundations and advance its research. They discussed multiple topics centered on AI, such as the theory of computation, neural networks, and computational creativity. The theory of computation concerns which problems can be solved by algorithms. Neural networks are used to build learning algorithms. Computational creativity is the simulation of creativity using computers.5
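The idea of a recursive function, a procedure that calls itself to complete a task, can be illustrated with a short sketch. The example below is written in Python rather than LISP, and the function names (`factorial`, `map_recursive`) are chosen here only for illustration; it is not taken from McCarthy's work.

```python
from typing import Callable, List, Sequence


def factorial(n: int) -> int:
    """Return n! by having the function call itself on a smaller input."""
    if n < 0:
        raise ValueError("n must be non-negative")
    # Base case: 0! is 1. Otherwise reduce the problem to (n - 1)!.
    return 1 if n == 0 else n * factorial(n - 1)


def map_recursive(fn: Callable[[int], int], items: Sequence[int]) -> List[int]:
    """Apply fn to every element, processing the list head-first.

    This mirrors the list-processing style LISP is known for:
    handle the first element, then recurse on the rest.
    """
    if not items:
        return []
    return [fn(items[0])] + map_recursive(fn, items[1:])


if __name__ == "__main__":
    print(factorial(5))                                # 120
    print(map_recursive(lambda x: x * x, [1, 2, 3]))   # [1, 4, 9]
```

Each function takes arguments and returns a value without changing anything outside itself, which is the mathematical notion of a function that the paragraph on LISP describes.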

In 1959, McCarthy wrote the article “Programs with Common Sense.” The article was crucial in introducing the idea of AI to scientists: it elaborated on the foundations of AI and drew attention to the field, as more computer scientists began to look into it. Years later, McCarthy created the Stanford Artificial Intelligence Laboratory. This laboratory would help develop many of the industrial robots that are found today. In 1961, Samuel created the first AI anthology. An AI program was also set to play against a human checkers player, and the program won, helping McCarthy reach his goal.6 A year later, McCarthy became a computer science professor at Stanford University, where he also taught AI. A machine beating a human was unheard of, and as a result the public began to realize the potential of intelligent machines.

Inspired by this triumph, McCarthy started to see the parallels between programming and human logic, which led him to establish long-lasting logical foundations for AI. He states that “All behaviors must be representable in the system.” Changes in behavior should be expressible with little effort. The machine should always work toward improving its behavior. The machine must grasp the idea of partial success, since progress may involve failure or long periods without success. The machine must create subroutines in its procedures and isolate the subroutines that are beneficial under certain conditions.7 These principles were the basis that McCarthy and his coworkers followed, and AI scientists still follow them today. With the foundations of AI established, McCarthy looked toward the future of the field and its long-term goal, which he states is achieving human-level AI.

In his article “From Here to Human-Level AI,” McCarthy explains the steps needed to advance AI. He begins by debunking previous definitions of human-level AI, then describes the pursuit of human-level AI as a race between two approaches, both of which he expects to succeed. The first approach is to gain a full understanding of human intelligence for AI to simulate; the second is to have AI take on the challenges that humans perform. Common sense is the basis for AI. A program needs to know facts about its surroundings and reason from them, such as knowing the shape of a building. Giving a computer full logic has proven difficult, because common sense is imprecise while mathematical logic is precise, and the two often conflict. McCarthy recommends adapting mathematical logic so that it allows for imprecision and keeps the common-sense aspect of AI. AI needs to determine whether something is true, false, or neither. In other words, it needs to understand concepts that have no definite truth value and respond accordingly. Common-sense reasoning is nonmonotonic: a conclusion drawn from limited information may have to be withdrawn once more information is given.

Giving AI elaboration tolerance is also important, because it matches the human thinking process. We are able to take in new information while evaluating a situation, whereas a computer would normally take in the information and then restart its thinking process to register it. AI needs to be able to form context, which can help “outer logical language needs.” AI needs to reason about events, which requires elaboration tolerance. An example McCarthy uses is: “wearing clothes is a precondition for airline travel, but the travel agent will not tell his customer to be sure to wear clothes.” AI needs introspection and self-awareness in order to examine itself and make decisions. One gap between human and computer performance lies in heuristics, or mental shortcuts. McCarthy believes the cause to be “our present inability to give programs domain and problem-dependent heuristic advice.” Those who seek to improve AI follow these criteria, and slow progress is surely being made. As humans learn more about how the brain works while also improving AI, achieving human-level AI does seem like a race, as McCarthy stated.8
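The nonmonotonic character of common-sense reasoning can be shown with a toy sketch in Python. The bird example, the `can_fly` function, and the `EXCEPTIONS` set are all assumptions made here for illustration; they are not McCarthy's formalism, only a simple default rule whose conclusion is withdrawn when more information arrives.

```python
from typing import Set

# Hypothetical facts that block the default conclusion "birds fly".
EXCEPTIONS = {"penguin", "broken wing"}


def can_fly(facts: Set[str]) -> bool:
    """Conclude that something flies by default if it is a bird,
    unless a known exception is among the facts."""
    if "bird" not in facts:
        return False
    # The default holds only while no exception is known.
    return not (facts & EXCEPTIONS)


if __name__ == "__main__":
    known = {"bird"}
    print(can_fly(known))   # True: with limited information, assume it flies

    known.add("penguin")    # learning more retracts the earlier conclusion
    print(can_fly(known))   # False
```

In classical logic, adding a fact can never remove a conclusion. Here the conclusion changes from true to false after a fact is added, which is what makes the reasoning nonmonotonic.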

McCarthy believes the best hope of achieving this is logical AI, the “formalizing of commonsense knowledge and reasoning in mathematical logic.”9 Simulating the human nervous system, specifically its neural networks, was seen as an alternative. McCarthy’s later research helped define the current-day creativity of machines, elaboration tolerance, efficient ways of solving calculus, and even machine free will.10 Although McCarthy died on October 24, 2011, before human-level AI was achieved, he would be proud to know that his legacy lives on. AI has become a core pillar of today’s technology. Facial recognition is one of its major applications: phones, security measures, and identification software all rely on it. AI is also relied on in many fields of study, such as in medicine, where it helps identify and diagnose conditions in patients. Thanks to John McCarthy, technology continues to evolve through AI.

1. Christopher Leslie, “John McCarthy,” in Salem Press Biographical Encyclopedia (Salem Press, 2021).

2. Christopher Leslie, “John McCarthy,” in Salem Press Biographical Encyclopedia (Salem Press, 2021).

3. John McCarthy and Edward A. Feigenbaum, “In Memoriam: Arthur Samuel: Pioneer in Machine Learning,” AI Magazine 11, no. 3 (September 15, 1990): 10, https://doi.org/10.1609/aimag.v11i3.840, 1-2.

4. S.L. Andresen, “John McCarthy: Father of AI,” IEEE Intelligent Systems 17, no. 5 (September 2002): 84–85, https://doi.org/10.1109/MIS.2002.1039837.

5. Mitchell Marcus, “The 2003 Benjamin Franklin Medal in Computer and Cognitive Science Presented to John McCarthy (Stanford California). John McCarthy’s Multiple Contributions to the Foundations of Artificial Intelligence and Computer Science,” Journal of the Franklin Institute 341, no. 3 (May 2004): 215, https://doi.org/10.1016/j.jfranklin.2003.12.023.

6. John McCarthy and Edward A. Feigenbaum, “In Memoriam: Arthur Samuel: Pioneer in Machine Learning,” AI Magazine 11, no. 3 (September 15, 1990): 10, https://doi.org/10.1609/aimag.v11i3.840, 1-2.

7. Mitchell Marcus, “The 2003 Benjamin Franklin Medal in Computer and Cognitive Science Presented to John McCarthy (Stanford California). John McCarthy’s Multiple Contributions to the Foundations of Artificial Intelligence and Computer Science,” Journal of the Franklin Institute 341, no. 3 (May 2004): 215, https://doi.org/10.1016/j.jfranklin.2003.12.023.

8. John McCarthy, “From Here to Human-Level AI,” Artificial Intelligence, Special Review Issue, 171, no. 18 (December 1, 2007): 1174–82, https://doi.org/10.1016/j.artint.2007.10.009, 1174-1749.

9. John McCarthy, “The Future of AI — A Manifesto,” AI Magazine 26, no. 4 (December 15, 2005): 39, https://doi.org/10.1609/aimag.v26i4.1842.

10. Christopher Leslie, “John McCarthy,” in Salem Press Biographical Encyclopedia (Salem Press, 2021).

7 comments

Ben Kruck

This is a well written article. It was interesting to read about John McCarthy and Artificial Intelligence. I didn’t know that AI was thought of and being designed that long ago. AI is very important in modern culture since it is constantly evolving, and acts as the next generation of technology. This is a well detailed article with a good structure as well.

Dylan Vargas

This article introduces the founder of AI, the process of making it, and what John McCarthy thought about it. For instance, when he talks about giving AI common sense to bring it closer to human-level thinking, it becomes difficult. The article and the way it tells the story are very intriguing to me, and it gives me all I need to know and more, including the challenges and where they wanted to take AI.

Jordan Davenport

Artificial intelligence is so interesting to me, and it is virtually in every technology in our lives, and it is constantly evolving. I appreciate the in-depth history of John McCarthy, the father of AI. He had a dream, and lived every day of his life pursuing it. It is outstanding that he taught himself calculus at 15 years old. He had ideas way ahead of his time, never gave up in his pursuit and I’m glad he was able to live and see how his thoughts and dreams became a reality. The rapid technological advances in human history, which John McCarthy had a huge impact on

Cassidy Colotla

Gosh, AI is such an interesting topic, especially because of how far it’s going; it’s so amazing it’s almost unsettling. To think of what McCarthy started is unbelievable. It was interesting to learn how much goes into AI and how it needs a lot of tolerance and planning in order to form context. It’s funny hearing words like common sense applied to technology. You did such great research and I wish you luck for your nomination!

Paula Ferradas Hiraoka

Hello Ruben,

First of all, congratulations on your nomination and getting your article published!

I love the detail in this article. Artificial intelligence is something that is incredibly prominent in almost all of our lives, and is sure to be one of the lead technologies of the future. I really do enjoy the information presented here, all the sources were reliable and the pictures used are amazing.

Overall, good article and good luck!

Alex Trevino

Truly remarkable. Artificial intelligence is something that is incredibly prominent in almost all of our lives, and is sure to be one of the lead technologies of the future, so it’s really impressive to see where it began. McCarthy is honestly someone I had not heard of before and it is very refreshing to hear about the start of something that not many discuss. I really do enjoy the information presented here, and hope to see more.

Madeline Chandler

This was an extremely well-written article, and it was so detailed. I love the detail in this article. It was so interesting because I do not have much knowledge about the progression of Artificial Intelligence and John McCarthy. This article really is thought-provoking because electronic systems are designed to have common sense with further knowledge like language and complex mathematical notions. If it was not for John McCarthy and his extensive knowledge regarding Artificial Intelligence, our technology probably would not be as advanced today.